Picture this. A long mahogany table in a brightly lit room. On one side of the room is a row of floor-to-ceiling windows. The skyline stretches outside. It’s beautiful, but you don’t have time for it because inside the room, around the table, are business people, conducting business. They nod and write notes, listening to a lone figure. That person is standing on the table, wagging their finger as they speak. They are assertive, clearly in charge. But this person is not a person at all. It’s a tiny robot, wearing a tiny shirt and tie.

Somewhere, miles away, a CEO is wearing a virtual-reality headset, looking out through the robot's eyes, talking about profit margins, wagging their finger, wearing nothing but a string vest and a pair of underpants.

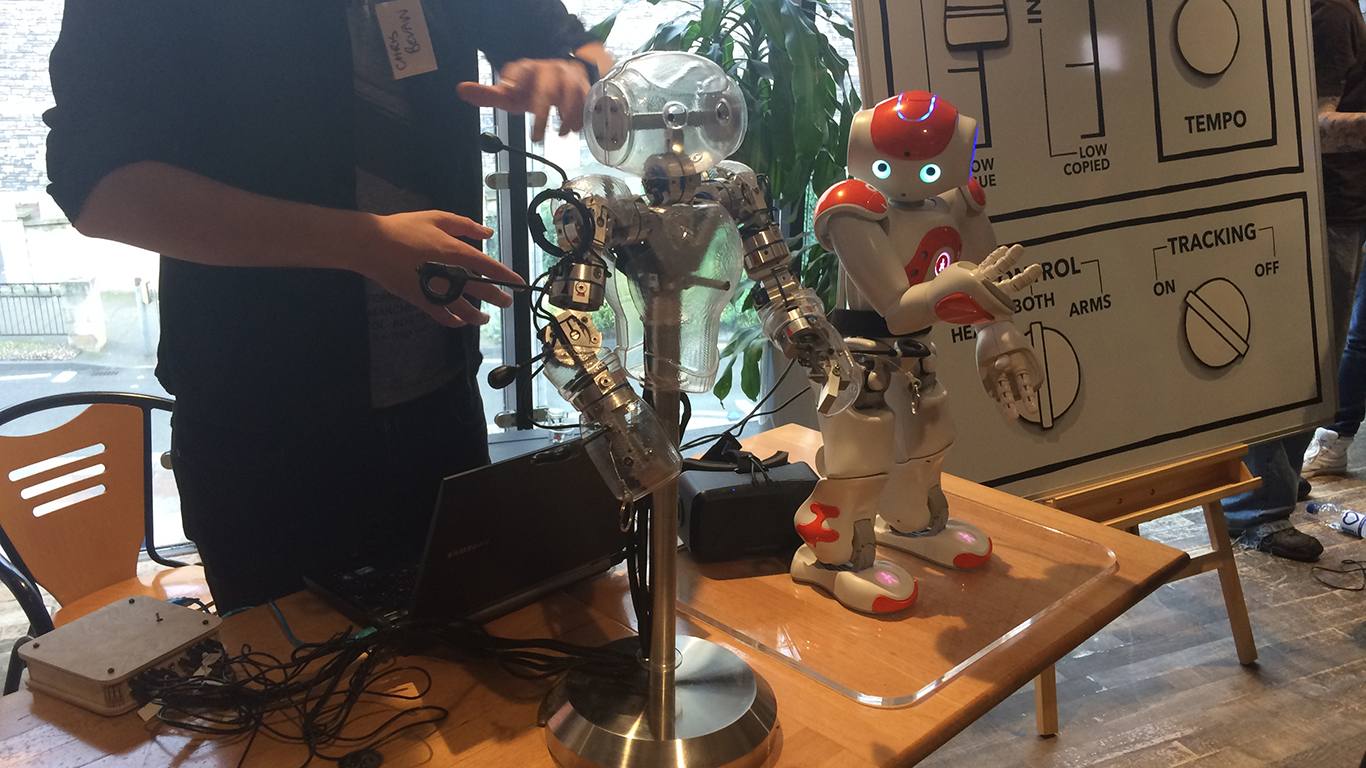

This, at least, is the future as I envision it based on a recent showcase of robotics in Bristol. Hosted in the Watershed digital creativity centre, Being There is a collaborative project funded by the Engineering and Physical Sciences Research Council (EPSRC), involving teams from the universities of Exeter, Bath, Oxford and Cambridge, as well as the Bristol Robotics Laboratory.

The main thrust of Being There is to investigate interactions between humans and robots in public places. It's a hazy topic that has spawned a heap of smaller projects, touching on everything from empathy for machines to questions about data privacy. One of the main themes across the set is tele-operated robotics, which boils down to users remotely controlling robot avatars. It's a sci-fi staple, but on the evidence of the showcase it's starting to become reality.

“Public spaces play a valuable role in providing shared understanding and common purpose, but if you are ill or disabled, or live too far away, this can be a barrier to participation,” explained Dr Paul Bremner, from the Bristol Robotics Lab, ahead of the showcase. “The aim of our research is for the robot to be an avatar for a remote person, so it will be taking part in the same activities as those actually present in the venue.”

In the Watershed, Bremner and his team set up an installation involving an Oculus Rift headset, a Microsoft Kinect and one of Aldebaran's NAO robots. Users don the VR headset and see through the robot's eyes. Even more impressively, the Kinect and the Oculus Rift's head tracker capture the user's body movements, which the small robot then replicates in real time. If someone tilts their head and points as they speak, for example, the robot mimics these actions.

Bremner characterised this as the next stage in telepresence robotics. If you’ve seen footage of Edward Snowden wheeling around on a portable screen, you’ll have some idea of where telepresence currently stands – or more accurately, where it zooms around like a possessed iPad. “Telepresence robots have basically been Skype on a stick,” explained Bremner. “It does give you some advantages over what you’d normally get in a Skype conversation, but what it doesn’t do is give you any idea about body language. You can’t really tell where people are looking, because gaze is distorted when on a flat screen.”

While sensor data can get a robot close to mimicking human gestures, making the machine's movements seem natural requires a degree of artistry. It's not enough, basically, to move the robot's arms at the same time as the human's. The vast gulf in complexity between a human body and a robot's limbs means subtle tics are lost.

So what do you do when you need to make a nonliving object seem alive? You bring in a puppet master.

Pull the string

“Engineers are trying to reinvent the wheel,” said David McGoran, artistic director of robotics and puppetry studio Rusty Squid. “Puppetry has been around for over 2,000 years, and so the art form is highly refined. You look at Pixar or Aardman Animations – within a split second these guys can make you cry, can make you laugh.”

Collaborating with Bremner and with Chris Bevan from the University of Bath, McGoran tasked a team of puppeteers with watching a human subject and imitating their movements. The puppet they used was a modified NAO robot, fitted with rods that allowed the puppeteers to move its limbs. Whereas the setup with the Oculus Rift headset and Kinect relied on a direct link between the sensors and a mechanical output, McGoran's project introduced a human element of interpretation. The puppeteers didn't copy what they saw, but translated it.

The data from the puppeteers' movements was captured, and is being used to help the robotics team home in on what does and doesn't work when communicating body language. Arm movements need to be exaggerated, for example, or they come across as a little jittery on such a small machine. McGoran is adamant this attention to physicality will be essential when developing full telepresence robots.

“Movement is the most important form of communication,” he told me. “When we’re looking at each other, the things we remember the most are our breath, our levels of tension, our sudden twitches… all those social and emotional signs. They’ll override anything we’re saying. Those deep levels of communication happen in the body, and I think within the creative industry, dancers, animators and puppeteers know that pretty well.”

The NAO robot is limited in what it can do with its body. It can't shrug, for example, and it can do close to nothing in the way of facial expression. Developing more complex and human-like robots will go some way to improving this, but the collaboration between McGoran, Bevan and Bremner is testament to the importance of bringing disciplines such as dance and puppetry into conversation with engineering and programming. When there are communities with thousands of years' worth of experience communicating through movement, it makes sense to listen to what they say.

As for the business avatar, as adorable as the idea of a tiny robot in a shirt and tie is, I'd like to hope this technology will have real social uses. Bremner explained how it could help people with disabilities, or those living in remote locations, to communicate. It's an honourable set of intentions, although, let's be honest, it's not going to be cheap, and it will need firm backing to stop it winding up as a corporate toy. Looking much further down the road, there's certainly potential for telepresence robotics to remove barriers to communication over long distances. But whether people will trust a robot to relay the subtleties of their body language is another question entirely.

Images: Thomas McMullan, Rusty Squid