As computer scientists attempt to make machines think and learn like humans, the middle ground is being taken up by researchers attempting to use AI to read our minds.

In the latest breakthrough, scientists at Kyoto University, Japan, have used deep neural networks, a form of AI, and found that computers can at least visualise what humans are thinking.

Before we get ahead of ourselves, it’s worth noting that the technology is nascent, and works only under optimal conditions. If you recoil at someone’s dubious new choice of profile picture on Facebook, your laptop isn’t going to start registering your distaste and broadcasting it to the world. That being said, the new technology has impressive – if ominous – potential applications.

The researchers released their findings – that AI could be used to decode thoughts – on bioRxiv (“bio-archive”), an archive and distribution service for unpublished pre-prints in the life sciences. The concept is not unprecedented; machine learning has previously been used in conjunction with fMRI (functional magnetic resonance imaging) scans to produce visual representations of what a person is thinking, albeit only when simple, binary images are involved.

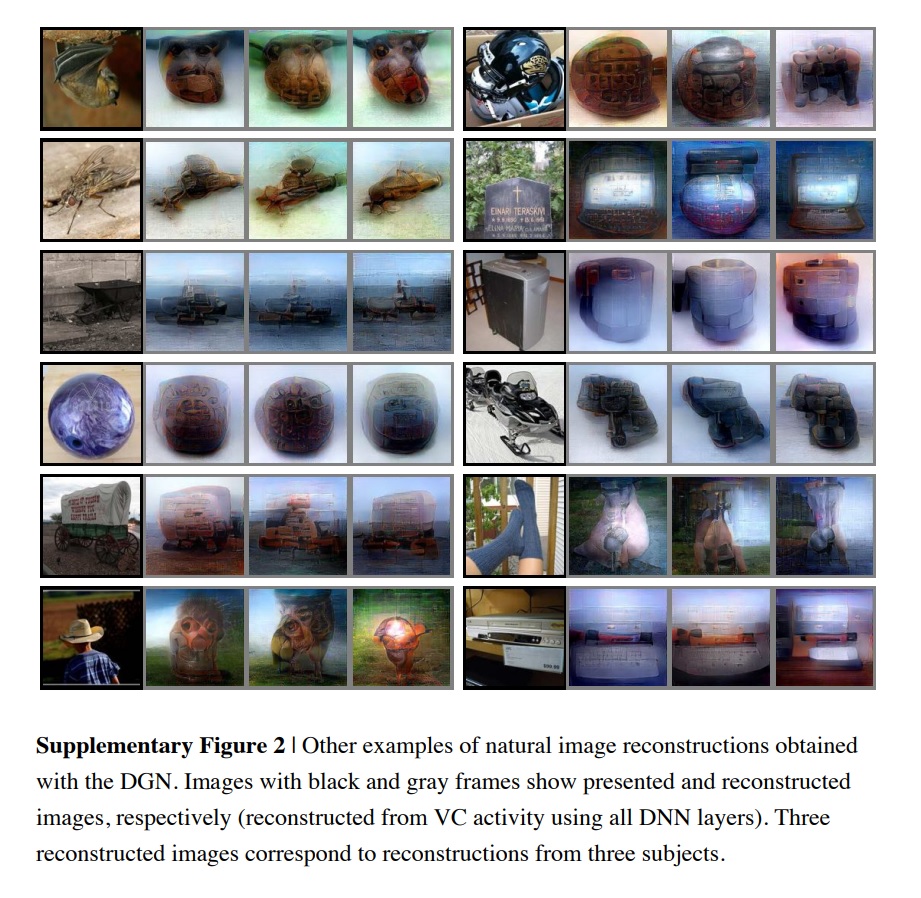

Participants in the study were shown natural images (of, for example, wildlife), geometric shapes and alphabetical letters for varying lengths of time. Their brain activity was logged, and a computer “decoded” the recordings to produce visualisations of the image each participant had been shown, as it was represented in the brain.

The Japanese team used this to hone new, more advanced methods of “decoding” thoughts using AI; visualisation, for example, now extends to more sophisticated, “hierarchical” images – the team tweeted an example of an image of an owl in the foreground, with a largely concealed, visually ill-defined human lurking behind it. They then developed a technique for reconstructing the image on the basis of brain activity.

AI’s “mind-reading” capacity, it turns out, is far more nuanced than we thought. Binary pixels are yesterday’s news, with the Kyoto team showing that AI can pick out objects themselves.

Yukiyasu Kamitani, one of the scientists behind the study, spoke to CNBC about how quickly the techniques are shifting: “Our previous method was to assume that an image consists of pixels or simple shapes. But it’s known that our brain processes visual information hierarchically extracting different levels of features or components of different complexities”.

“These neural networks or AI model can be used as a proxy for the hierarchical structure of the human brain,” he concluded.

What’s more, the researchers found that the technology still worked when participants merely recalled an image, not just when they were actively viewing it. This was certainly more difficult, as the chart below shows – looking directly at an image produces a much clearer reconstruction than trying to evoke a memory of it does – but the visualisation process was still possible.

And what of potential applications? Mind-reading AI gaining traction and viability isn’t exactly a soothing thought, but it’s not all dystopian doom and gloom; the ostensibly “mind-reading” visualisation technology could one day allow you to create images or art simply by imagining them, while those suffering from hallucinations could have them visualised, helping to improve psychiatric care.

There you have it. Mind-reading AI is growing steadily more sophisticated. Industries worldwide, from the creative to the medical, could benefit from the new technology which, as ever, warrants vigilant monitoring and robust safeguards.

Image credit: Guohua Shen, Tomoyasu Horikawa, Kei Majima and Yukiyasu Kamitani, ‘Deep image reconstruction from human brain activity’
